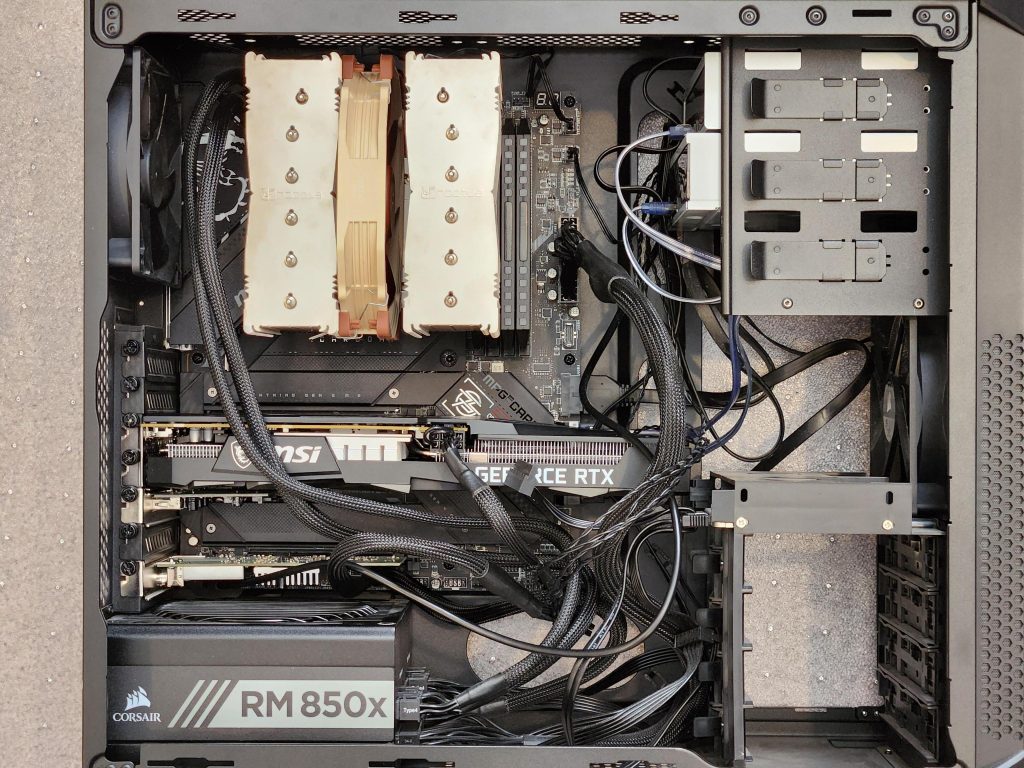

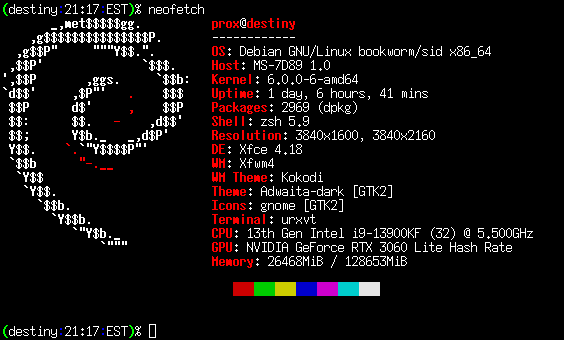

I finally decided to upgrade my Linux workstation this past week. I went for the following specifications:

- Intel Core i9-13900KF CPU

- MSI GeForce RTX 3060 GPU

- MSI MPG Z790 Carbon Motherboard

- 128GiB Corsair Dominator Platinum DDR5 5200MHz RAM

- Noctua NH-D15S CPU Cooler

- Samsung NVMe 980 PRO 2TB SSD

And, I reused the following from my previous build (originally a Core i9-9900K):

- Corsair Carbide 200R Case

- Corsair RM850x Power Supply

- Crucial SATA MX500 4TB SSD

- 2x Western Digital WDC WD40EZRZ-22GXCB0 4TB HDD via USB

- Pioneer SATA XL BD-RW

- LG SATA BD-DR

- Hauppauge PCI-e 4x DVB/HDTV Tuner

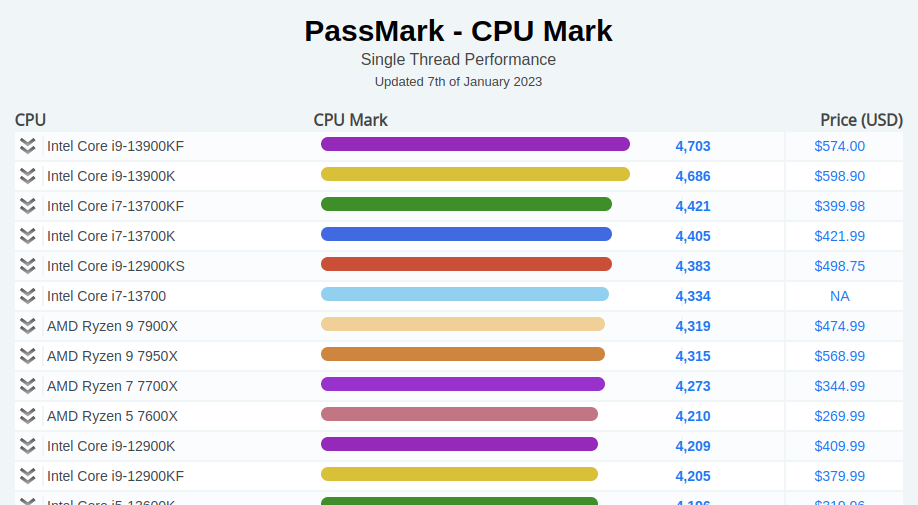

- USB 3.0 PCI-e Adapter

I didn’t go for water cooling, and I used the NA-RC7 “low noise” adapter to make the CPU fan spin slower and quieter. I wasn’t going to overclock, so I figured this would be fine since the NH-D15S is a beast of a heatsink. I don’t game at all but wanted a mid-range GPU in case I decided to do anything more interesting than Google Earth. I picked the i9-13900KF because it has the best single-thread performance (the criterion for my last build, too):

I still don’t know why the KF is faster than the K variant. K means unlocked multiplier and F means without integrated graphics. Supposedly, since no heat or power is consumed by the GPU on the KF series, it can overclock more than the K variant. I’m not sure that fully explains it, though. The i9-12900K slightly edges out the i9-12900KF farther down the list, but that is well within the margin of error of PassMark’s testing, I’d think.

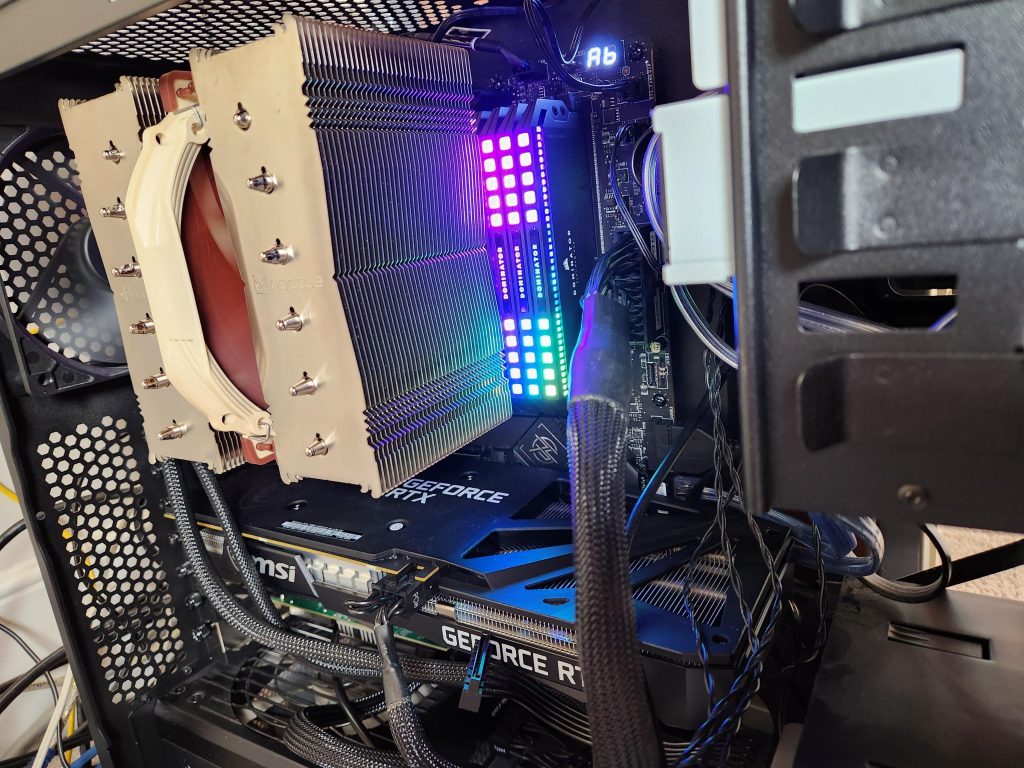

The machine doesn’t actually sit on my desk, so I don’t care about any kind of flashy RGB stuff, but it seemed to be impossible to find premium RAM that didn’t have some sort of LEDs on it (in addition to the motherboard). Here’s the Corsair RAM being “blingy”:

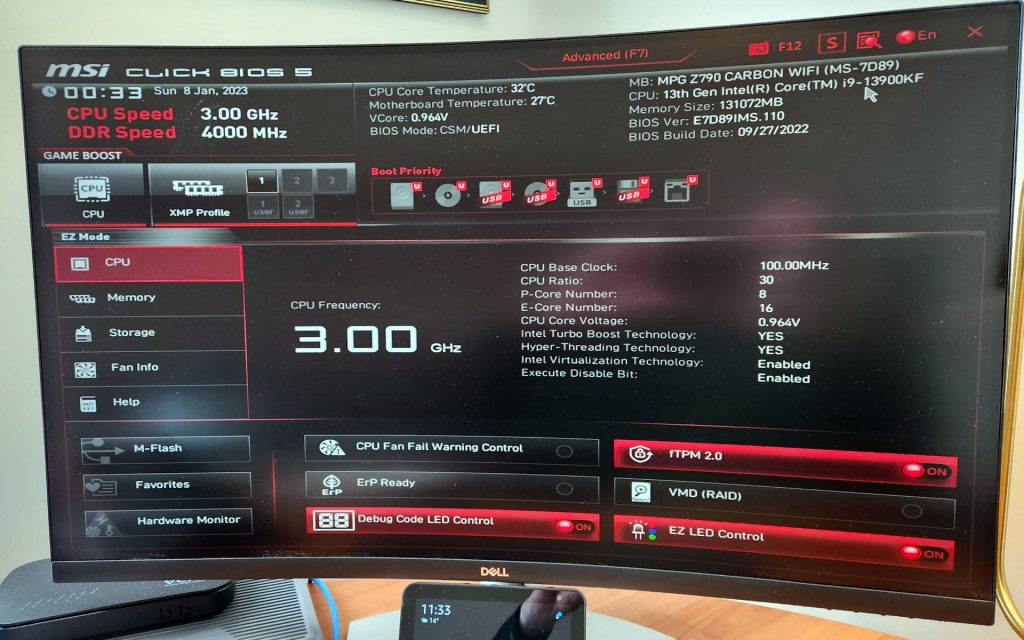

The build went fine, overall. I think I could have done a better job applying thermal paste but meh. The MSI BIOS quickly indicated that all my stuff was working as expected. I did notice that the RAM frequency was 4000 MHz but the RAM itself was spec’ed at 5200 MHz. I found out later that the 5200 MHz is a [sanctioned] OC specification (Intel XMP) so I’m fine with 4000 MHz as long as things are stable.

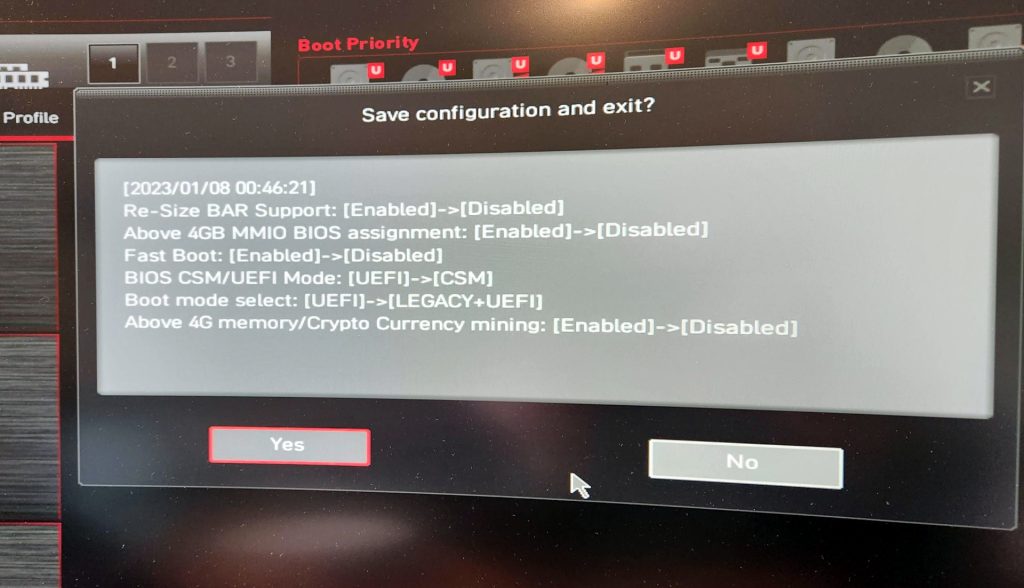

I did end up changing to legacy boot because I didn’t see any reason to change from grub-pc to grub-efi (I have no use for secure boot). The MSI BIOS flipped some other options when I did that:

I initially booted my Debian install from the original NVMe SSD connected by a USB converter, which surprisingly went very well (albeit a bit slowly). I then used a Knoppix live USB to clone the first few MiB of the disk (for GRUB), recreated all filesystems on the 2TB SSD, and rsync’ed the content over (forgetting the -p in rsync, so I had to set the setuid bit on ping and mtr again!).
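The setuid slip is easy to reproduce on scratch files. Here is a minimal sketch, assuming rsync and GNU stat are installed; the function name `perm_after_rsync` and the temp paths are made up for illustration and have nothing to do with the actual migration commands.

```shell
#!/bin/sh
# Show what mode a setuid file ends up with after an rsync copy.
# $1: the rsync flag to test, e.g. -r (no permission preservation) or -a.
# The body runs in a subshell so the umask change and variables stay local.
perm_after_rsync() (
    umask 022                            # fixed umask so results are repeatable
    work=$(mktemp -d) || exit 1          # throwaway directory
    mkdir "$work/src" "$work/dst"
    : > "$work/src/tool"                 # empty stand-in for a binary like ping
    chmod 4755 "$work/src/tool"          # setuid + rwxr-xr-x
    rsync "$1" "$work/src/" "$work/dst/"
    stat -c '%a' "$work/dst/tool"        # GNU stat: print the octal mode
    rm -rf "$work"
)
```

Per the rsync man page, without -p a newly created file gets the source permissions masked by the umask, with the special bits cleared. So `perm_after_rsync -r` prints 755, while `perm_after_rsync -a` (which implies -p) prints 4755.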

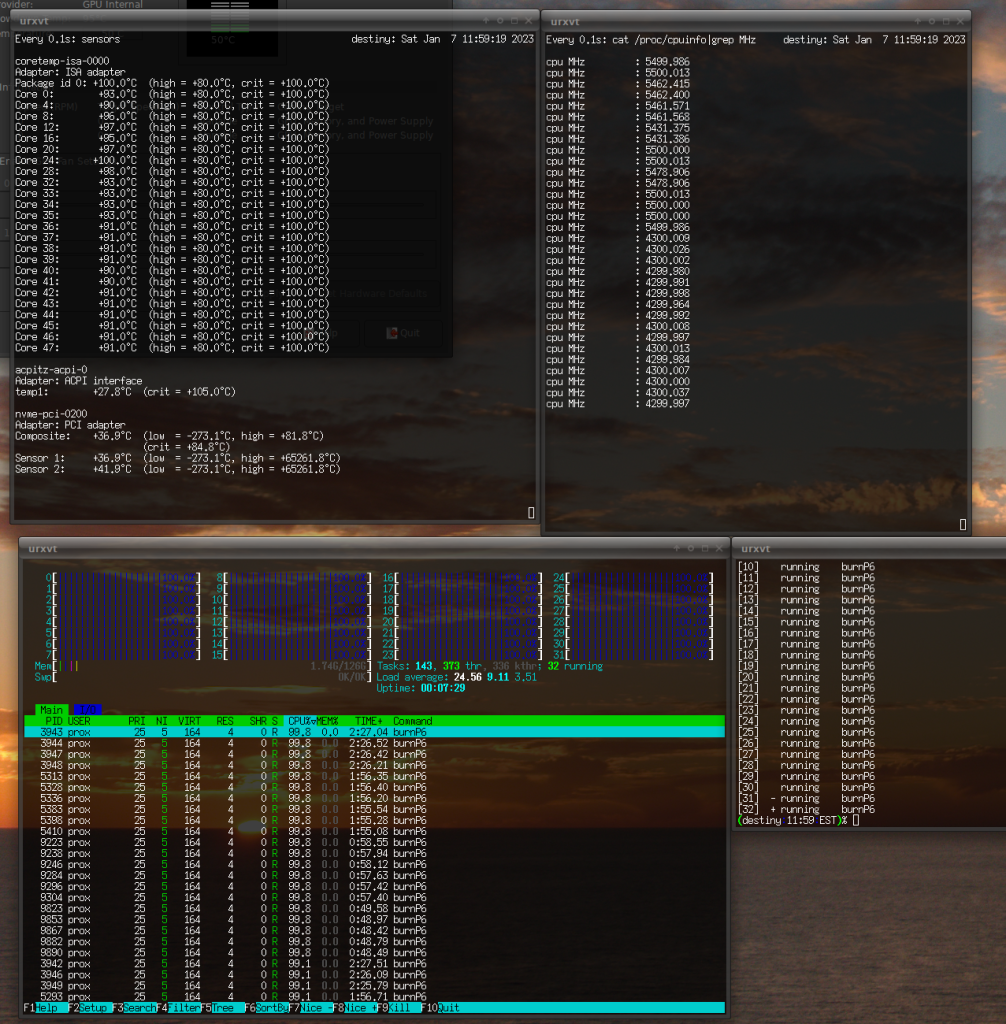

The Noctua cooler works fairly well although things get pretty toasty if I load up 32 processes of burnP6 and let it sit for a few minutes:

There are a few interesting things above that I noticed after the fact. First, the way the 16 E-cores and 8 P-cores are enumerated in Linux is interesting. The P-cores are listed first and have core IDs 0, 4, 8, 12, 16, 20, 24, 28. The E-cores are 32 through 47. I don’t know why the P-core IDs go up in steps of 4, but the sysfs enumeration is even weirder because it breaks out threads, which are only supported on the P-cores.

```
(destiny:20:57:EST)% for i in $(seq 0 31); do echo -n "${i}: "; echo -n "Core ID #"; cat /sys/devices/system/cpu/cpu${i}/topology/core_id; done
0: Core ID #0 // P-core
1: Core ID #0 // P-core
2: Core ID #4 // P-core
3: Core ID #4 // P-core
4: Core ID #8 // P-core
5: Core ID #8 // P-core
6: Core ID #12 // P-core
7: Core ID #12 // P-core
8: Core ID #16 // P-core
9: Core ID #16 // P-core
10: Core ID #20 // P-core
11: Core ID #20 // P-core
12: Core ID #24 // P-core
13: Core ID #24 // P-core
14: Core ID #28 // P-core
15: Core ID #28 // P-core
16: Core ID #32 // E-core
17: Core ID #33 // E-core
18: Core ID #34 // E-core
19: Core ID #35 // E-core
20: Core ID #36 // E-core
21: Core ID #37 // E-core
22: Core ID #38 // E-core
23: Core ID #39 // E-core
24: Core ID #40 // E-core
25: Core ID #41 // E-core
26: Core ID #42 // E-core
27: Core ID #43 // E-core
28: Core ID #44 // E-core
29: Core ID #45 // E-core
30: Core ID #46 // E-core
31: Core ID #47 // E-core
```

I’ve annotated which is a P-core vs. E-core. I’m still not clear on how the Linux kernel really decides which tasks to throw at E-cores vs. P-cores, and while looking at htop as I use the workstation it seems that everything’s just treated equally. Maybe it’s because the INTEL_HFI stuff is not fully integrated yet. I did notice that the 6.0.12 kernel that’s current on Debian testing at time of writing does not have INTEL_HFI_THERMAL enabled; enabling it might help (or make things worse, since the E-cores run at a lower clock speed?). I’ve played around with turning on / off all of the E-cores and most of the P-cores (minus cpu0, which is a P-core and cannot be disabled) but haven’t really concluded anything concrete about power saving vs. performance.
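The annotation and the on/off experiments can be scripted against sysfs. This is only a sketch under a stated assumption: since only the P-cores have two threads here, a logical CPU whose `thread_siblings_list` names more than one CPU is treated as a P-core. That is a heuristic of mine, not something the kernel reports through these files. The function names `classify_cpus` and `set_cpu_online` are hypothetical helpers, and the optional directory argument exists only so they can be pointed at a fake tree.

```shell
#!/bin/sh
# Print "<cpu> P-core" or "<cpu> E-core" for each logical CPU.
# Heuristic (assumption): a CPU with an SMT sibling is a P-core.
# $1: sysfs CPU directory, defaults to the real one.
classify_cpus() {
    root=${1:-/sys/devices/system/cpu}
    for dir in "$root"/cpu[0-9]*; do
        [ -r "$dir/topology/thread_siblings_list" ] || continue
        cpu=${dir##*/cpu}
        siblings=$(cat "$dir/topology/thread_siblings_list")
        case $siblings in
            *[,-]*) kind=P-core ;;   # e.g. "0-1" or "0,1": shares a core
            *)      kind=E-core ;;   # a single number: no SMT sibling
        esac
        echo "$cpu $kind"
    done | sort -n
}

# Bring a logical CPU online (1) or offline (0). Needs root on a real
# system. CPUs without a writable "online" file are reported and skipped.
# $1: cpu number, $2: 0 or 1, $3: sysfs CPU directory (optional).
set_cpu_online() {
    root=${3:-/sys/devices/system/cpu}
    f="$root/cpu$1/online"
    [ -w "$f" ] || { echo "cpu$1: cannot be toggled" >&2; return 1; }
    echo "$2" > "$f"
}
```

With the layout shown above, `classify_cpus` should agree with my hand annotations, and `set_cpu_online 16 0` (as root) would take the first E-core offline. One caveat: if a P-core’s sibling thread is already offline, the heuristic will mislabel the remaining thread as an E-core.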

Second, this is the first time I’ve seen a core on a desktop PC of mine reach 100°C. I’m guessing that this resulted in some throttling (cpuinfo shows 5478.906 MHz for that core ID so I’m not sure how much). Maybe if I had opted for water cooling (or removed the “low noise” adapter!) it wouldn’t have gotten so hot.
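One way to stop guessing about throttling is to read the kernel’s throttle counters. The sketch below assumes the kernel exposes `thermal_throttle/core_throttle_count` under each CPU directory, as Intel x86 kernels with the thermal interrupt driver normally do. `throttle_counts` is a hypothetical helper name, and the optional directory argument is only there for testing against a fake tree.

```shell
#!/bin/sh
# Print "<cpu> <count>" for every logical CPU that exposes a thermal
# throttle counter, sorted by CPU number.
# $1: sysfs CPU directory, defaults to the real one.
throttle_counts() {
    root=${1:-/sys/devices/system/cpu}
    for f in "$root"/cpu[0-9]*/thermal_throttle/core_throttle_count; do
        [ -r "$f" ] || continue          # skip if the glob matched nothing
        dir=${f%/thermal_throttle/*}     # strip back to .../cpuN
        echo "${dir##*/cpu} $(cat "$f")"
    done | sort -n
}
```

Running `throttle_counts` before and after a burnP6 session and comparing the numbers would show whether any core actually throttled, rather than inferring it from the cpuinfo clock speed.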

While I’m not going to use this system for gaming, I did notice that the RTX 3060 is crippled and will detect ETH mining:

```
(destiny:21:09:EST)% lspci|grep VGA
01:00.0 VGA compatible controller: NVIDIA Corporation GA106 [GeForce RTX 3060 Lite Hash Rate] (rev a1)
```

Apparently only the first RTX 30xx cards produced did not have this restriction but all of the current ones do. I don’t really care but I don’t like the hardware I buy to be encumbered for silly reasons.

All in all, this feels like a good upgrade and should last 4-5 years like my last i9-9900K build, which was done toward the end of 2018.